T R A I L E R | W O R L D

The Art and Craft of a Trailer Editor

Inside the Mind of a Trailer Editor: Cutting Emotion Into Seconds

‘Trailer Editor’ isn’t just a job title; it’s a mindset. It’s about seeing stories not in hours, but in beats. Seconds. Moments that spark emotion, curiosity, or chills. As a trailer editor, your job is to capture the soul of a film or series and bottle it into two minutes or less. No pressure, right?

After over 20 years working as a Trailer Editor across Hollywood and now Mumbai, I’ve come to see this craft as a mix of instinct, structure, chaos, and precision. You’re part storyteller, part DJ, part strategist. And while the job may look like "just cutting clips," what we really do is shape feeling.

The Magic Lies in the Cut

Every Trailer Editor knows the timeline is both playground and battlefield. You often begin with unfinished footage—hours of raw material—and a blinking cursor. No script. No map. Just instinct and brief notes. And then begins the hunt for the moments that hit: a charged glance, a dramatic silence, a killer line of dialogue.

Trailer editing is ruthless with time. Every frame must fight to stay. But when the pieces come together, and the music swells in just the right way, something clicks. And you know you’ve nailed it—goosebumps.

More Than Just Cool Shots

To be clear: great trailers aren’t just highlight reels. A Trailer Editor builds trailers with structure and intent. There’s an arc, a rhythm. A reason why one beat leads to the next. Sometimes it follows a three-act mini story. Sometimes it rides a wave of pure tone and energy.

What stays constant is the emotional core. As a trailer editor, you’re not just selling a film—you’re selling an experience. You want people to feel something before they even watch the actual content. Wonder, excitement, fear, laughter—whatever the tone is, it’s your job to make it land, fast.

Sound Is the Soul of the Cut

Any Trailer Editor will tell you: if picture is the body, sound is the soul. A single sound cue can change the entire energy of a cut. A silence placed just right can say more than words ever could.

Music is everything. It guides pacing. It shapes tone. And when layered with tight sound design and dialogue, it becomes a pulse. You don’t just watch a trailer—you feel it.

The Collaborator’s Cut

Being a Trailer Editor also means being a collaborator. You're often balancing input from creative directors, producers, marketing leads—and occasionally the filmmakers themselves. The challenge is to stay true to the story while serving multiple goals: tease, promote, and excite.

You might make 20 versions of one cut before landing on “the one.” But every iteration gets sharper. The pressure can be intense, but the payoff—the moment people watch your trailer and say, “I need to see this”—makes it worth it.

Final Frame

To me, being a Trailer Editor is one of the most challenging and creatively satisfying roles in the industry. You don’t just cut stories—you launch them. You help shape how the world sees a film, often before a single frame hits the screen.

It’s fast, it’s emotional, it’s high-stakes—and I wouldn’t trade it for anything.

🎬 Curious to see how I bring stories to life in under 2 minutes? Check out my latest trailer work here.

Pari Film – Teasers clock in 41 million views!

It was a great experience editing 7 short teasers for this film. The teasers alone clocked in over 41 million views.

Pari’s scary social media strategy sets a movie marketing example.

Brand Introduction

Pari, one of the most anticipated Bollywood horror films, was scheduled to hit screens on March 2, during the festival of Holi. Produced under the banner of Clean Slate Films, owned by the film’s leading lady, Anushka Sharma, Pari was marketed through social media to generate maximum awareness around its release.

Summary

Pari was promoted and marketed through social media in the lead-up to its release, leveraging timely and regular material from the film such as posters, ‘screamers’ (a play on the word ‘teasers’, suited to the horror genre), and many other activities.

Problem Statement/Objective

The Pari team intended to promote the film as a unique take on the horror genre, while also emphasizing its release over the Holi weekend. Communication around the promotional material pivoted on the message that Pari was ‘Not a usual horror film’.

Execution

Keeping in mind the genre of the film, Pari was promoted through suspenseful short teasers, termed screamers. These screamers, such as ‘Growing nails’ and ‘I love you too’, were created specifically for the film’s social media campaign.

Beginning the social media activity for Pari a couple of months before its release, Anushka Sharma rolled out the first screamer, kicking off the film’s promotional campaign.

The film’s Holi release was emphasized with the hashtag #HoliWithPari, and the second screamer followed exactly one month before release.

The third screamer, ‘I love you’, was rolled out on Valentine’s Day, creating a strange and scary perception around the film. Screamer 4 followed on 19th February, and momentum built steadily as the release date approached.

Exclusive posters and images were posted on social media in order to sustain the momentum.

With another poster launch, the Pari team sustained the conversation and intrigue on social media while firmly establishing in users’ minds that the film was releasing on Holi.

On Twitter, Pari engaged audiences by sending out creepy replies with GIFs from the film through Clean Slate Films and Anushka Sharma’s accounts, surprising the audience and ensuring maximum engagement.

Additionally, the team used an automated Messenger bot to send important information to Messenger users, along with a new AR-based face filter that urged audiences to share their pictures for a chance to win tickets to Pari.

Screamers 5, 6, and 7 were rolled out at different times before release, with the seventh posted just one day before the film opened for maximum impact.

As one of the first Bollywood films to use Facebook’s new Press and Hold post format, Pari gave social media users a scare by showing an endearing image as the thumbnail and revealing a scary one upon interaction.

As a part of the offline activities that were amplified digitally, the Pari team conducted a series of pranks at a theatre in Mumbai, promoting them on social media.

Countdown posts were rolled out on social media to create recall in the minds of social media users.

After the film’s release, positive reviews were collated into a Twitter Moment, and a similar post featuring 9 reviews was created on Instagram.

Results

The 7 screamers, posted at regular intervals by the Pari team, received a combined total of over 41.4 million views.

The Pari trailer drew over 17 million views, while the overall promotional campaign generated 1.4 million impressions on Twitter, with 22,941 engagements and 18,955 mentions.

On Facebook, Pari received 85 million impressions, with 268K engagements and a reach of over 78 million, whereas on Instagram, Pari clocked 1.3 million impressions, 90K engagements, and a reach of over 1.2 million.

Workflow Breakdown of Every Best Picture and Best Editing Oscar Nominee

A Great Write-up on the Workflows of Every Best Picture and Best Editing Oscar Nominee

March 5, 2018

So we reached out to the editorial teams of all 9 Best Picture nominees and all 5 Best Editing nominees and got the details of their technical workflows. There were 11 films in all, since three films were nominated in both categories. (The Post’s editorial team was unfortunately unavailable, so we relied on second-hand reports for that film.)

- Baby Driver

- Call Me By Your Name

- Darkest Hour

- Dunkirk

- Get Out

- I, Tonya

- Lady Bird

- Phantom Thread

- The Post

- The Shape of Water

- Three Billboards Outside Ebbing, Missouri

We could have written an article on each of these films, but rather than diving deep into a particular one, we’re presenting an overview, highlighting some of the most interesting elements.

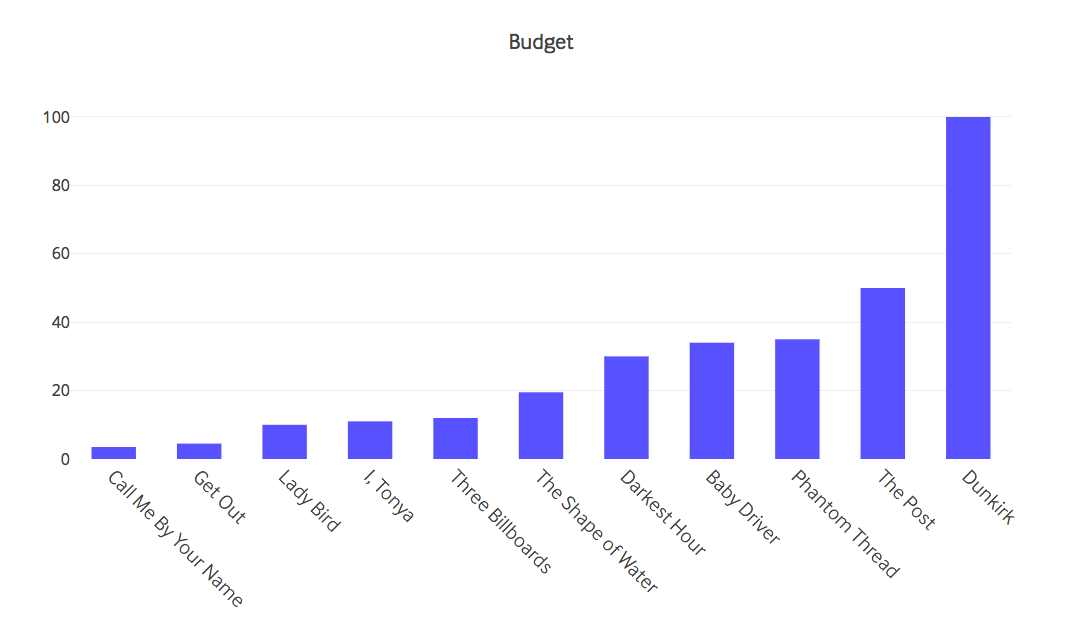

Budget

This is not an article about the funding of Hollywood films, but I think that it’s helpful to keep the relative budgets in mind as we look at things like the length of principal photography and the size of the editorial team.

Here’s a look at the budgets for each of these films, from smallest to largest. It’s quite extraordinary how diverse the budgets are on this list of Oscar nominees, from $3.5 million on Call Me By Your Name all the way up to $100 million on Dunkirk.

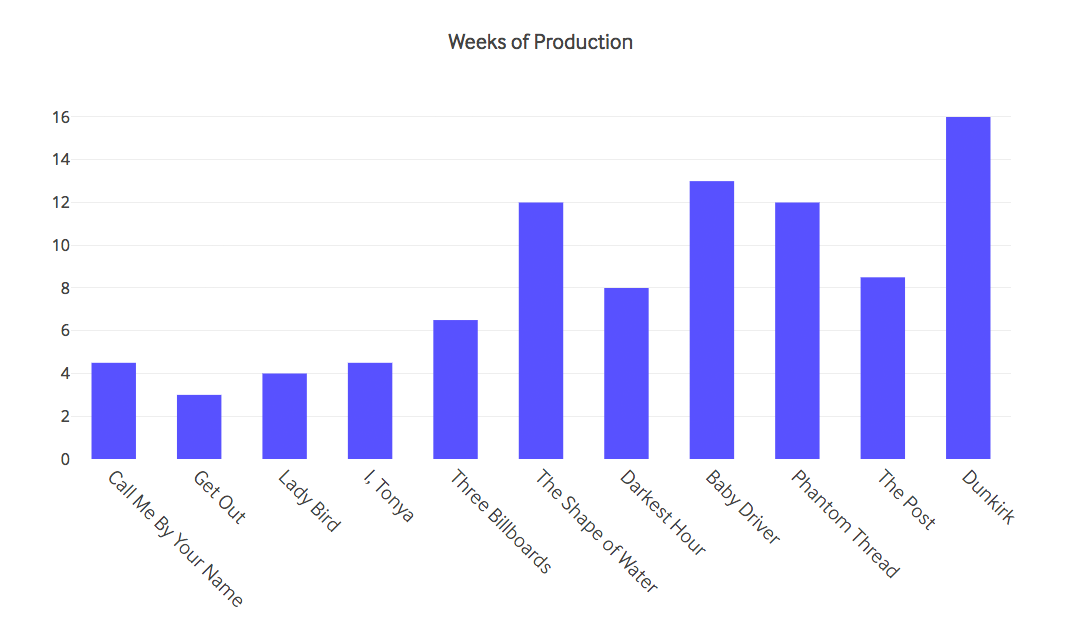

Production Schedule

There’s a general trend up and to the right again, with a few exceptions. Production is always the most expensive phase of a feature film, so it’s not surprising that the lower-budget films generally had tighter shooting schedules. What’s genuinely impressive is that the Get Out team shot a film nominated for four Oscars in just three weeks!

The Post’s shooting schedule might have been longer had the entire film not been made on such a compressed timeline. As soon as he read the script, Steven Spielberg wanted to make and release The Post quickly, because he felt it was an important piece of social commentary for our time. The film was finished less than 9 months after Steven first read the script, and that kind of schedule means every phase of the film has to be rushed.

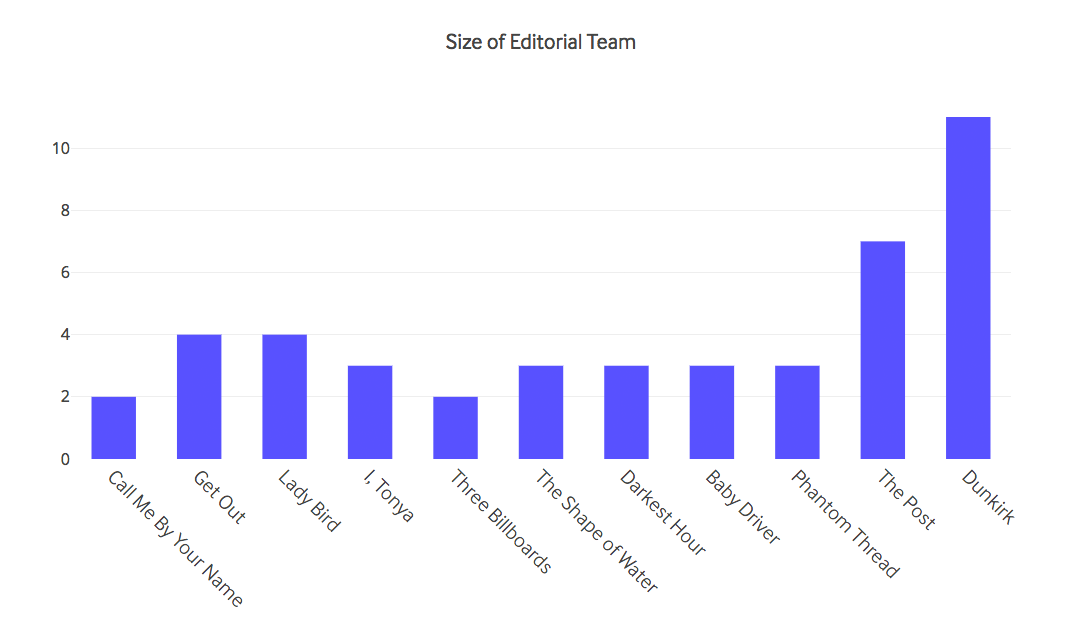

Editorial Team

Keeping the films in the same order again, let’s compare the sizes of the editorial teams. I excluded post supervisors and VFX editors, keeping this list to editors, assistant editors, associate editors, and editorial assistants.

While it’s certainly true that the two most expensive films had the largest teams, the rest of the nominated films needed no more than 4 on the editorial team. A large part of Dunkirk’s huge editorial team was needed for the various conform processes (keep reading), but even without that, it had a significantly larger team than any other film on our list.

The Post also had a significant added challenge—they were cutting two films at once! When Steven Spielberg decided to fast-track The Post, he was already in the midst of postproduction on Ready Player One, which couldn’t simply be put on hold for a year. Michael Kahn and Sarah Broshar, the two editors of The Post, thus had to be able to switch from one film to the other on a daily basis. So, I think we can probably cut them a little extra slack.

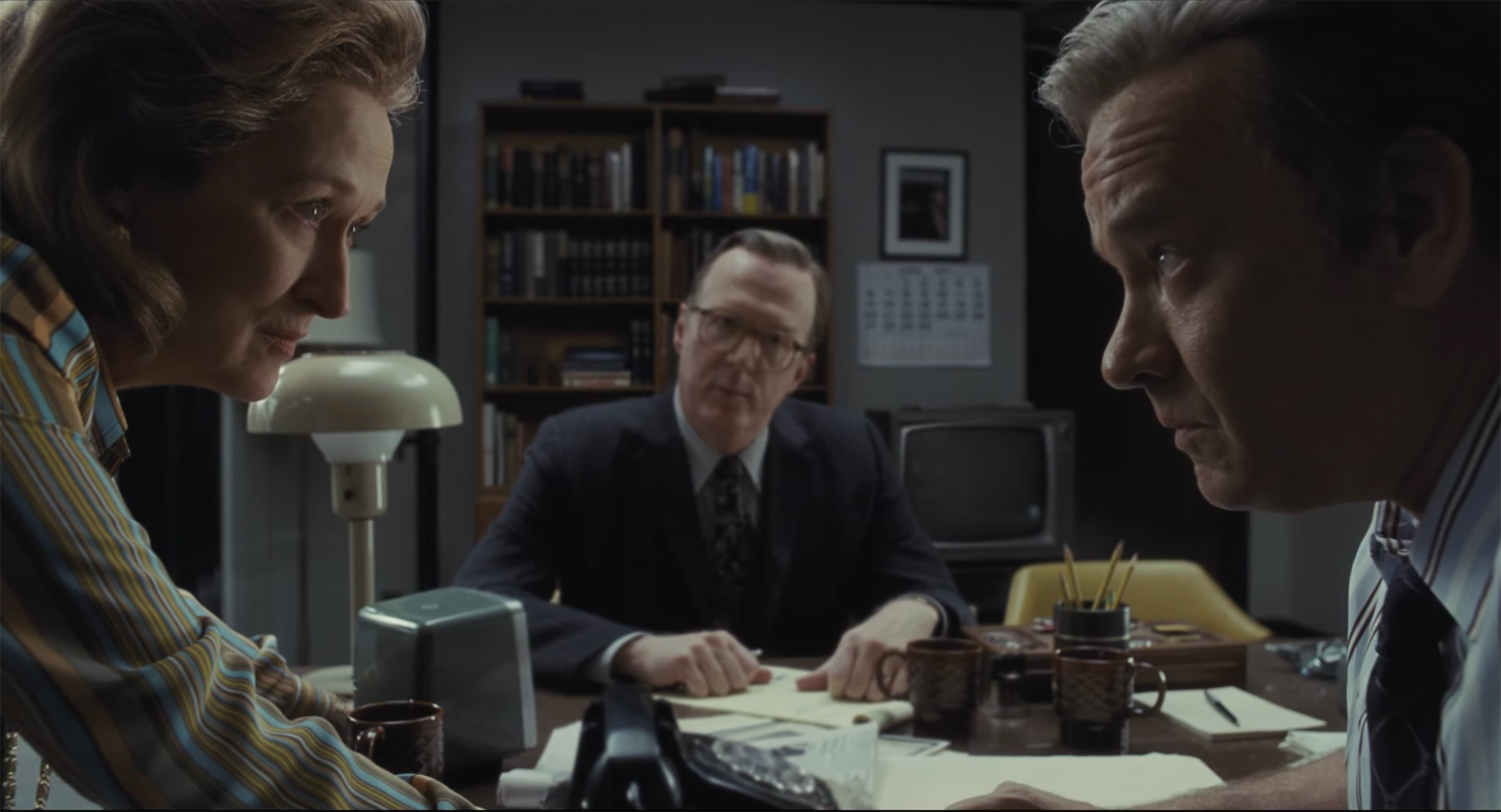

The Post © 20th Century Fox

I, Tonya’s team faced the combination of a small budget and over 200 tricky VFX shots. Although Margot Robbie trained hard, a professional skater had to complete the most difficult moves, requiring various tracking techniques to place Margot back into the film, in addition to CG crowds and stadiums. The small independent budget meant that Steve Jacks had to pull double duty as first assistant editor and VFX editor (he is credited with both titles). He would cut in VFX shots in the morning, switch to sound work in the afternoon, and sometimes stay late to prep shots to turn over to VFX.

In contrast, Three Billboards’s workflow was so simple that, even with a two-man editing team through most of production, the first assistant editor Nicholas Lipari spent very little time on the technical details. On a given day, he’d spend around two hours ingesting and processing footage, and then he would be able to spend the rest of the day working with the editor Jon Gregory, which is quite an unusual scenario. It’s an unfortunate fact that the duties of the assistant editor are often so different from the duties of the editor that an assistant can perform their job well for years without getting the skills necessary to move up to an editing position.

(Do you agree with that? Disagree? Let us know in the comments or email blog(at)frame.io—we’re preparing an article on that topic.)

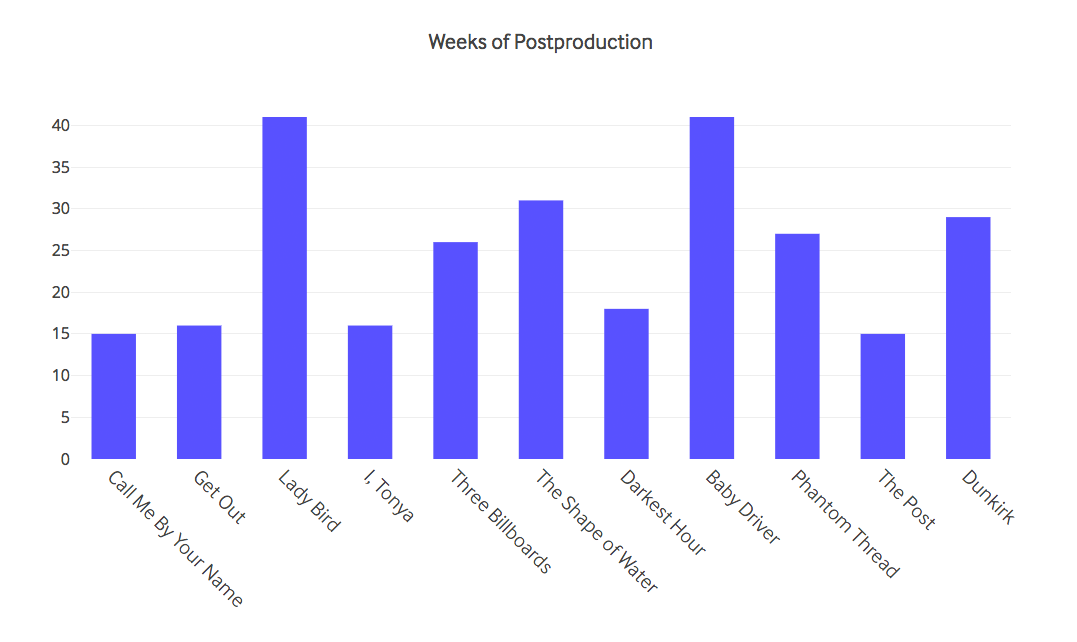

Postproduction Schedule

You might think that large productions like Dunkirk and The Post would have the luxury of spending as much time in the edit as they need, but the reality is that the amount of time allocated to postproduction doesn’t correlate very well with budget. I’ve kept the films in order of budget from left to right, and there isn’t a discernible trend at all. Note that I am including only the time spent in postproduction after the end of principal photography, although of course these teams were all hard at work producing assembly edits while the films were being shot.

On studio films, the release date is usually set before they even begin shooting, and the process of jockeying for prime release dates means that a studio film can end up with plenty of time for post, or very little.

The smaller independent films, like Three Billboards and Lady Bird, had a lot more freedom to take as much time as needed in the edit. Of course, even small independent films sometimes have to hit deadlines, often set by a particular film festival they’re targeting. It’s interesting to note, though, that even though the independent films generally reported much more flexibility in their postproduction schedules, they didn’t necessarily take the longest.

Sam Rockwell and Frances McDormand, 2018 Best Supporting Actor and Best Actress winners for Three Billboards Outside Ebbing, Missouri. © Fox Searchlight Pictures

In the case of this year’s nominees, several factors dictated the amount of time in post: technical complexity of the edit (Baby Driver and Dunkirk), pressing release dates (The Post), and complex VFX (The Shape of Water), while several films simply took whatever time they felt they needed (Three Billboards, Lady Bird, Call Me By Your Name).

Hardware

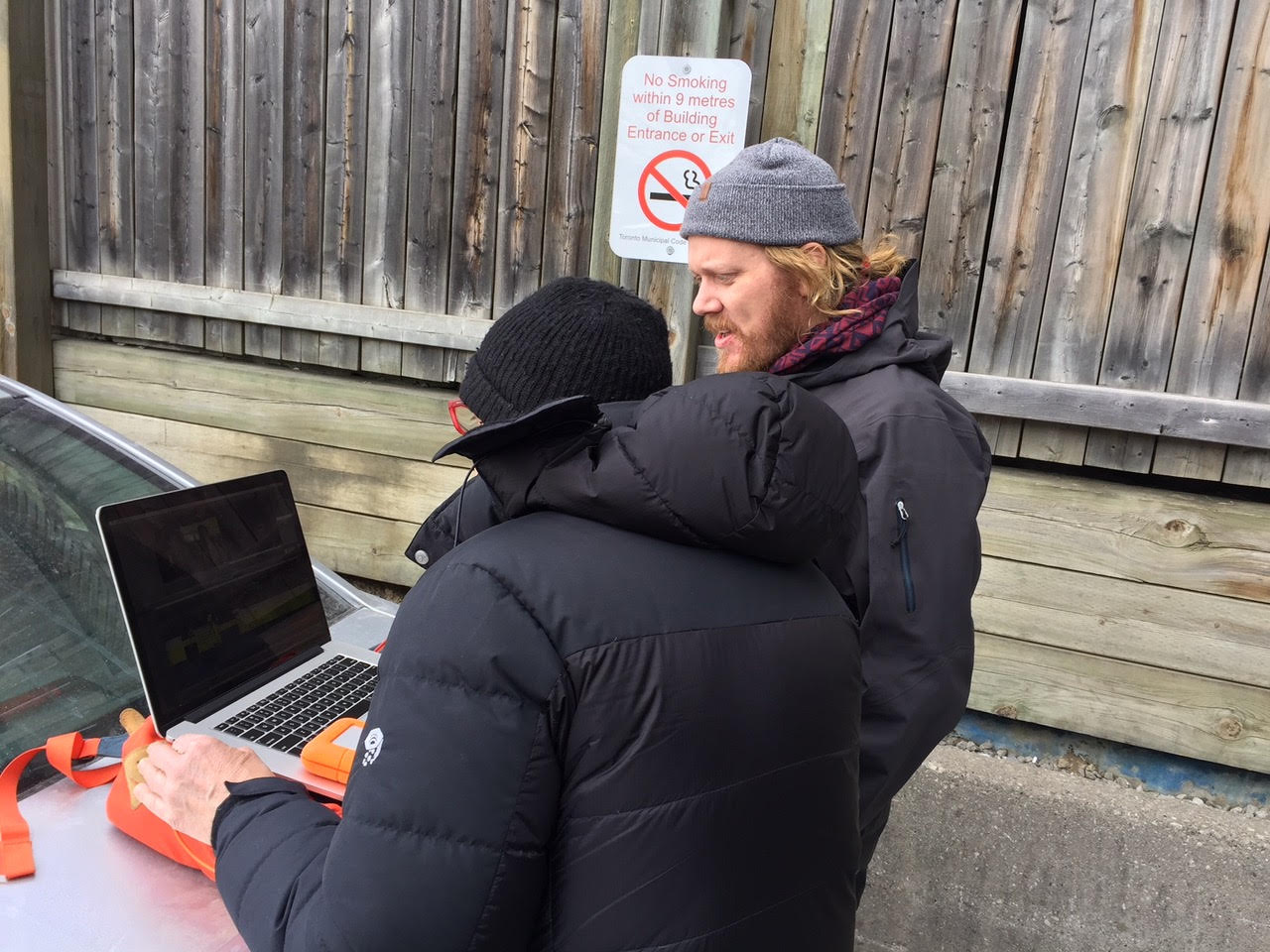

Given the huge range of budgets for these films, it’s a testament to the amazing democratization of the tools of postproduction that these films were cut on similar hardware. In fact, many of these films were cut on laptops from portable hard drives, as well as in full edit suites.

Tweaks to The Shape of Water in a parking lot, photo courtesy of Doug Wilkinson.

It will come as no surprise that Apple’s hardware dominated the list, with most films editing off of Mac Pro “trash cans” in the main edit suites (the new iMac Pros were not available when these films were being edited). Call Me By Your Name’s team opted for top-of-the-line iMacs, and some will be surprised that Darkest Hour and The Shape of Water both used old Mac Pro towers, though The Shape of Water actually moved to Mac Pro trash cans in the middle of the shoot. Old habits die hard.

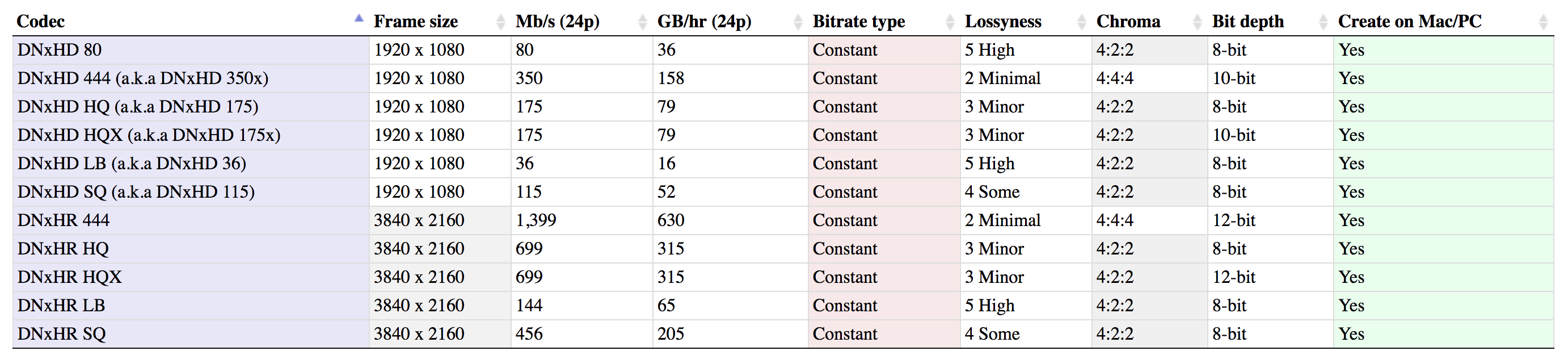

The Edit: Still Standard HD

Given Avid’s dominance of the feature film market, it’s no surprise that every one of these films was edited using Avid’s DNx family of codecs. Most used DNxHD 115, though four films (Baby Driver, Lady Bird, Call Me By Your Name, and The Shape of Water) used DNxHD 36. If you’re more used to the ProRes family, DNxHD 36 is comparable to ProRes Proxy, while DNxHD 115 is comparable to ProRes 422 (you can see a comparison table here).

Comparison of DNx codecs. You can find the full chart here.

Although it’s becoming easier and easier to edit in 4K (and these productions certainly had the budgets for high-end computers), all 11 teams chose to edit in standard 1920 x 1080 resolution. For readers who are used to working on smaller productions where the entire postproduction process happens at the same facility, this might be surprising. These films were all captured or scanned at 4K or higher, and the budgets were plenty large enough to pay for top-of-the-line hardware, so why not edit 4K? If people are editing feature films in raw 6K, surely it can be done in 4K without too much trouble.

Why Not 4K?

If you consider the workflows of these types of films, though, a 4K edit still doesn’t make much sense. Before we ask why these films wouldn’t edit in 4K, we first have to ask ourselves, “what are the benefits of editing in 4K?”

The primary benefit for most people who edit in 4K is simply the fact that they can skip the offline editing process and do all of their work directly on the camera-original files. Although it’s very easy to design a smooth offline workflow, and all of the major editing packages support it, there is some nice simplicity in avoiding the need to transcode for an offline workflow. If you are doing your color correction and finishing inside of your editor (which is increasingly possible), you have the added significant advantage of being able to move fluidly between phases of your postproduction process. You can spend more time on temporary color-correction as you are editing, knowing that you can continue that work later rather than having to start over again. You can also make edits to the film during the finishing phase, without the headaches of a reverse conform process.

But these feature films, even the low budget ones, all used a traditional offline workflow that involved a handoff from the editors to a separate finishing team at another facility. So there was no possibility of the kind of all-in-one workflows that are now becoming feasible.

As amazing as the iMac Pros and Z840s are for renderless 4K editing, there are still plenty of hiccups and slow-downs involved with editing a significant film in 4K. Temp VFX and color-correction can quickly choke playback on a system that’s not perfectly tuned, and render times stretch out.

The other significant disadvantage to a 4K edit for these films is the size of the storage required, even if you edit off of proxy files instead of the original camera files. At different points in the process, the teams behind Baby Driver, Three Billboards, and The Shape of Water had a complete copy of the film running off of a single hard drive connected to a portable laptop, which would not have been possible had they edited in 4K.

Baby Driver editor Paul Machliss’s laptop and LaCie hard drive on set. Image courtesy Paul Machliss.

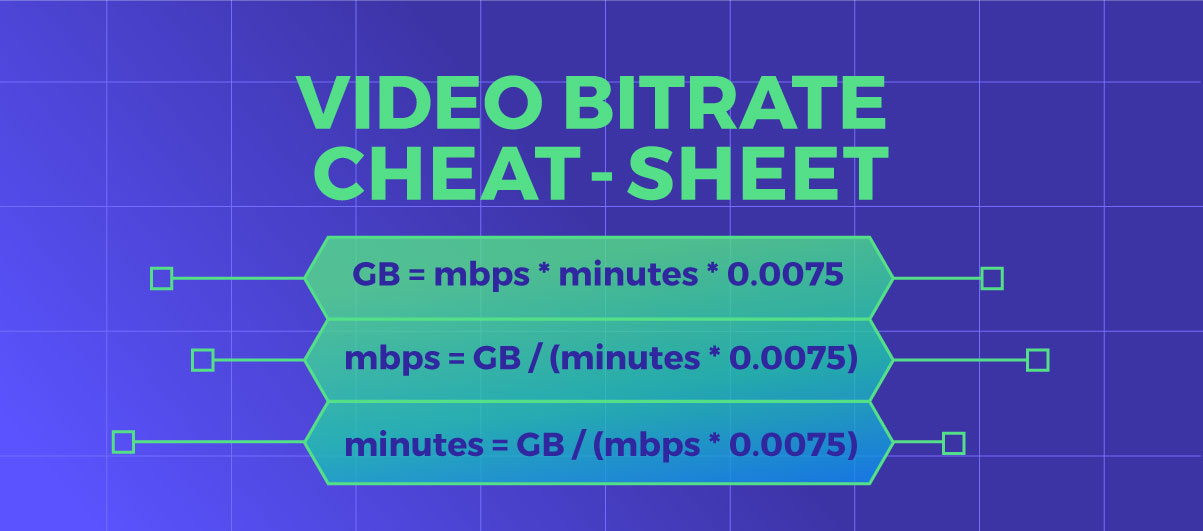

A 2-hour feature film might easily have a 50:1 shooting ratio (capturing 50x as much footage as ends up in the final film), which means 6,000 minutes of recorded footage. Using our bitrate formulas, we can quickly calculate the space required.

ProRes 422 at UHD is 503 Mbps, so we plug our numbers into the top formula:

503 Mbps × 6,000 minutes × 0.0075 = 22,635 GB, or roughly 22.6 TB.

That much data is reasonable to store in a powered RAID, but you’re going to have trouble throwing it in your backpack.
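The arithmetic above can be checked with a short Python sketch. The 0.0075 factor is 60 seconds per minute, divided by 8 bits per byte and by 1,000 megabytes per gigabyte. The sketch also runs the same 6,000 minutes through the 1080p offline codecs mentioned earlier. It uses Avid's nominal DNxHD 115 and DNxHD 36 bitrates, and treating those names as Mbps at 1080p23.976 is an assumption made for illustration. The helper name `storage_gb` is hypothetical.

```python
def storage_gb(bitrate_mbps: float, minutes: float) -> float:
    """Storage in decimal gigabytes for a video bitrate and a duration.

    Equivalent to bitrate * minutes * 0.0075, since
    0.0075 = 60 s/min / 8 bits per byte / 1000 MB per GB.
    """
    return bitrate_mbps * minutes * 60 / 8 / 1000


runtime_minutes = 120   # a 2-hour feature
shooting_ratio = 50     # 50:1, as in the example above
footage_minutes = runtime_minutes * shooting_ratio  # 6,000 minutes

# Bitrates in Mbps. The ProRes figure is the one quoted in the article;
# the DNxHD figures are nominal rates (assumption: 1080p at 23.976 fps).
codecs = {
    "ProRes 422 (UHD, article's figure)": 503,
    "DNxHD 115 (1080p)": 115,
    "DNxHD 36 (1080p)": 36,
}

for name, mbps in codecs.items():
    gb = storage_gb(mbps, footage_minutes)
    print(f"{name:<36} {gb:>9,.0f} GB  ({gb / 1000:.2f} TB)")
```

At DNxHD 36, the same footage comes to 1,620 GB (about 1.6 TB). That helps explain how a complete copy of a film could run off a single portable drive during an offline edit.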

So, if you can’t take advantage of the primary benefits of a 4K workflow, and it will slow you down and hamper you, it just doesn’t make any sense to use cutting-edge 4K workflows purely for their own sake. Even if you’re cutting Dunkirk.

The one benefit that these productions would have received from a 4K edit is simply the greater resolution and detail during the editing process. That can be an advantage, but just not a big enough advantage to tempt these teams …yet.

Note that I’m specifically talking about editing in 4K vs 1080p, which is a separate question from whether to capture and master at 4K or 1080p. All of these films used a traditional offline workflow, which allowed them to edit in 1080p but produce a final output at a higher resolution.
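Here is a minimal sketch of why a 1080p offline edit does not limit the final resolution. It assumes a simplified CMX3600-style EDL that contains only straight cuts. An EDL identifies each shot by reel (or clip) name and source timecode, not by resolution, so the same cut list can be relinked to full-resolution scans or camera files during the conform. The parser, the `online` mapping, and the file paths are hypothetical. Real EDLs also carry dissolves, speed changes, audio events, and comments, and this sketch ignores all of them.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Event:
    number: int
    reel: str      # reel or clip name that identifies the source
    track: str     # "V" for picture
    src_in: str    # source timecode in/out (resolution-independent)
    src_out: str
    rec_in: str    # where the shot sits in the edited timeline
    rec_out: str


def parse_cuts(edl_text: str) -> list[Event]:
    """Parse straight-cut ('C') events from a simplified CMX3600-style EDL.

    Header lines (TITLE, FCM), comments, and non-cut events are skipped.
    """
    events = []
    for line in edl_text.splitlines():
        parts = line.split()
        if len(parts) == 8 and parts[0].isdigit() and parts[3] == "C":
            num, reel, track, _cut, s_in, s_out, r_in, r_out = parts
            events.append(Event(int(num), reel, track, s_in, s_out, r_in, r_out))
    return events


def conform(events: list[Event], online_media: dict[str, str]) -> list[tuple]:
    """Pair each offline event with its full-resolution source.

    Only the media changes; source and record timecodes carry over as-is.
    Raises KeyError if a reel has no full-resolution source.
    """
    return [
        (e.number, online_media[e.reel], e.src_in, e.src_out, e.rec_in)
        for e in events
    ]


# Hypothetical EDL exported from the 1080p offline edit.
EDL = """TITLE: REEL_1_OFFLINE
FCM: NON-DROP FRAME

001  A001C003 V     C        14:22:10:00 14:22:15:00 01:00:00:00 01:00:05:00
002  A002C011 V     C        15:03:40:12 15:03:44:00 01:00:05:00 01:00:07:12
"""

# Hypothetical mapping from reel name to full-resolution scans.
online = {
    "A001C003": "/online/scans_4k/A001C003",
    "A002C011": "/online/scans_4k/A002C011",
}

for row in conform(parse_cuts(EDL), online):
    print(row)
```

Because the cut list carries no resolution information, swapping the `online` mapping from 4K to 6K or 8K sources would leave the edit decisions untouched.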

Film vs Digital Acquisition

The decision to shoot on film vs. digital is always a fraught one. It can be a question of budget, a question of personal taste, or a question of subject matter. All of the films on this list had enough of a budget to consider film, but it’s interesting to note that, of the six movies shot on film (Baby Driver, I, Tonya, Call Me By Your Name, The Post, Phantom Thread, and Dunkirk), five of them were period pieces of some sort. Films set in the past tend to shoot on film more often than films set in the present because we tend to associate the look of older film stocks with the past.

Of course, it’s always possible to emulate the look of an older film stock with a digital image, but I’m trying not to take sides too much here.

The only non-period-piece to use film was Baby Driver, which is even more surprising given the fact that the film’s synchronization to its soundtrack required real-time, on-set editing (more on that later). Shooting digitally could have simplified this workflow, but Edgar Wright is a film purist, and it’s pretty hard to argue with a director like Edgar Wright.

- Baby Driver: 35mm, Alexa Mini at 2.8K ARRIRAW.

- Call Me By Your Name: 35mm.

- Darkest Hour: Alexa SXT, Alexa Mini at 3.4K ARRIRAW.

- Dunkirk: IMAX 65mm and Standard 65mm film.

- Get Out: Alexa Mini at 3.2K ProRes 4444.

- I, Tonya: 35mm, Alexa 65 at 6.5K ARRIRAW, Phantom.

- Lady Bird: Alexa Mini at 2K ProRes 4444.

- Phantom Thread: 35mm film.

- The Post: 35mm film.

- The Shape of Water: Alexa Mini at 3.2K ARRIRAW.

- Three Billboards Outside Ebbing, Missouri: Alexa XT Plus at 2K ARRIRAW, Ursa Mini.

Of these 11 films, Dunkirk was the only film to do an optical print with no digital intermediate. This was again a personal aesthetic choice by Christopher Nolan to avoid the digital color tools and restrict the color treatment to the limited tools of the traditional photochemical color-timing process.

The five other films that shot on physical film all scanned the images and did their color correction and online effects on digital files. They then exported DCPs (digital files) so that the films could be projected digitally, or else printed those digital files back onto film for a film projection (Phantom Thread). Dunkirk, instead, was projected on film using prints made from the original camera negatives where possible.

There are several caveats, however. Dunkirk still did digital scans of the camera negatives, for several different reasons. First, in spite of his love of physical film, not even Christopher Nolan can deny the obvious advantages of digital editing, so the film had to be scanned and transcoded to a 1080p offline codec for editing. Second, although Christopher Nolan tried to do as many effects in-camera as possible, some scenes still needed digital VFX. Those scenes were scanned at 8K and delivered to the visual effects house, which worked at 6K. The 6K files were then printed back to film negative and spliced in with the prints that had come from the camera negatives.

Dunkirk. © Warner Bros.

There was also a third complete scan for digital delivery, since many theaters have moved to digital-only projection. That scan was done after the film had been color-timed photochemically, though, so no digital color manipulation was needed beyond matching the digital files as closely as possible to the look of the all-analog version.

(I’ve barely scratched the surface of the extraordinarily complicated workflow on Dunkirk. I recommend this article from the ASC and this interview with Steve Hullfish if you’d like to dive deeper.)

Dailies

I mentioned above that every film used Avid’s DNx offline codecs for editorial, although a couple of these films added a few extra twists to the process.

Most of these films followed a fairly standard dailies process. For those films that shot on 35mm, the negatives were developed and immediately scanned to digital files, then shown to the production team digitally, either by sending a physical hard drive or via a remote dailies viewing system. For the films that shot digitally, the files were transcoded and then delivered to the production team via hard drive or via the cloud. Processing dailies is never “simple” (color needs to be properly managed, sound synced, and metadata carefully tracked), but the process is fairly standardized.

Two of these films had particularly complicated dailies workflows, though for completely different reasons.

For Baby Driver, the challenge came from the fact that the film was timed extremely precisely to the soundtrack. The main character is constantly listening to music on his earbuds throughout the film, and every scene is precisely choreographed to fit the music. Every shot had to be timed in order to match this soundtrack, which was piped to the actors via hidden earpieces.

The cast of Baby Driver wore earpieces in order to move in sync to the music.

That obviously presented a huge synchronization challenge, which they addressed by referring to detailed animatics, matching the live-action footage, shot by shot, to the pre-edited animatics. To be sure that they were hitting their beats precisely, it was necessary to edit the film in real time, dropping each take into the animatic timeline to make sure that everything lined up as planned.

Since the film was shot on 35mm, they weren’t able to use the “real” dailies for this on-set check. Instead, the film’s editor Paul Machliss received a signal from video village, which was captured in real time using Qtake’s video assist software. Qtake recorded ProRes files, which could be imported into Avid on Paul’s MacBook Pro, and Paul would then transcode those files in the background to DNxHD 36, stored on an external hard drive.

So Paul was building his assembly edits with these temporary dailies, but he was also receiving the dailies that had been scanned from the 35mm negatives. Since his temporary dailies were lower quality, he swapped in the 35mm scans as soon as they arrived. Because it took a few days for negatives to be mailed from Atlanta to Los Angeles, scanned, processed, and then sent back to Atlanta, Paul’s on-set assistant had to swap out temporary dailies for “real” 35mm-scanned dailies on a daily basis. (That was a lot of dailies.)

Since these temporary dailies were coming from a video tap which recorded independently from the film camera, the clips coming in from the 35mm scans didn’t match the temporary dailies precisely. So the new dailies would have to be carefully linked back into the Avid timelines, replacing the temporary dailies without losing sync. Fortunately, reliable time-of-day timecode saved the day, allowing much of the relinking to happen smoothly.
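Here is a minimal sketch of timecode-based relinking. It assumes that both the video-tap recordings and the film scans carry the same 24 fps non-drop-frame time-of-day timecode. It also assumes that a replacement is accepted only if a single scanned clip covers every frame the timeline uses. The `Clip` type, the `relink` function, and the clip names are hypothetical illustrations, not the Baby Driver team's actual tooling.

```python
from __future__ import annotations

from dataclasses import dataclass

FPS = 24  # assumption: both sources use 24 fps non-drop-frame time-of-day timecode


def tc_to_frames(tc: str, fps: int = FPS) -> int:
    """Convert non-drop-frame 'HH:MM:SS:FF' timecode to an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


@dataclass
class Clip:
    name: str
    start_tc: str          # time-of-day timecode of the clip's first frame
    duration_frames: int

    @property
    def start(self) -> int:
        return tc_to_frames(self.start_tc)

    @property
    def end(self) -> int:
        # Exclusive end frame.
        return self.start + self.duration_frames


def relink(temp: Clip, in_offset: int, length: int,
           scans: list[Clip]) -> tuple[str, int] | None:
    """Find a scanned clip covering the frames a timeline uses from a temp clip.

    in_offset and length describe the used frames relative to the start of the
    temporary (video-tap) clip. Returns (scan name, offset into that scan), or
    None when no single scan covers the whole range.
    """
    first = temp.start + in_offset   # absolute time-of-day frame
    last = first + length            # exclusive
    for scan in scans:
        if scan.start <= first and last <= scan.end:
            return scan.name, first - scan.start
    return None


# Hypothetical clips: one video-tap take and two scanned camera rolls.
temp = Clip("videotap_take3", "14:22:10:00", 24 * 40)
scans = [
    Clip("scan_roll12_a", "14:21:58:12", 24 * 90),
    Clip("scan_roll12_b", "14:24:00:00", 24 * 60),
]

print(relink(temp, in_offset=48, length=120, scans=scans))
```

The temp clip starts 11 seconds and 12 frames (276 frames) after scan_roll12_a. Adding the 48-frame in-point gives an offset of 324, so the call prints `('scan_roll12_a', 324)`. In practice, a video tap that recorded independently of the camera can drift or start mid-take. Matching on shared timecode is what makes most of these cases mechanical, and the rest still need an editor's eye.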

(edit: we earlier reported that the temporary dailies came in at 25fps. That was incorrect: Paul had to work with proxies from a 25fps video tap on The World’s End, but not on Baby Driver. We regret the error.)

Dunkirk’s dailies workflow was even more complicated than Baby Driver’s, though without the real-time component. All of the complications in Dunkirk’s workflow were due to the fact that the production was shooting simultaneously in two different non-standard types of physical film, and Christopher Nolan wanted to view his dailies projected by a real film projector using a third non-standard type of film.

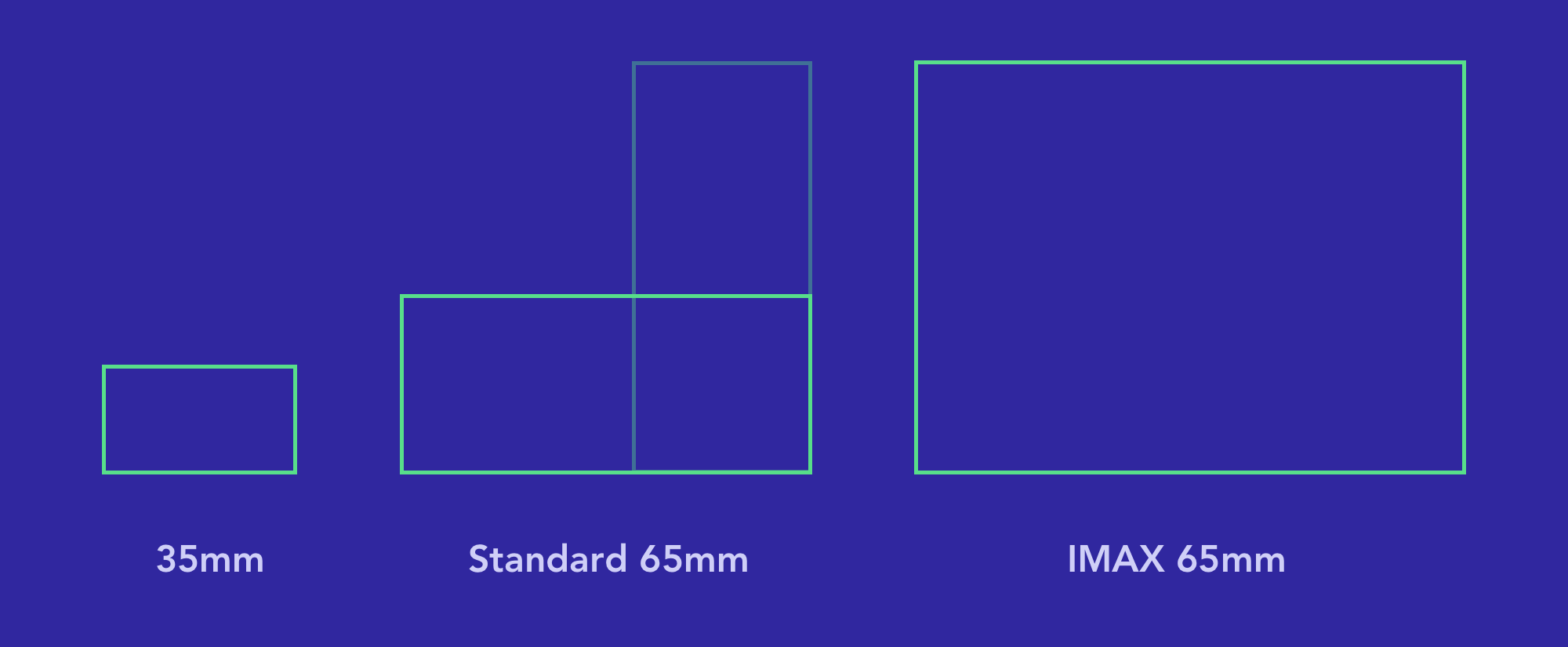

In the days before digital capture and digital intermediate, everyone had to view their dailies on film—that element was just a throwback to the old days, nothing particularly new. The tricky part was that Christopher Nolan wanted to shoot part of the film on IMAX 65mm, part of the film on standard 65mm, and to view his dailies at IMAX sizes.

In spite of the similar-sounding names, IMAX 65mm and standard 65mm frames have different sizes and different aspect ratios. The names are misleading because IMAX runs 65mm film horizontally, so the 65mm dimension spans the height of the frame, while standard 65mm runs vertically, so the 65mm dimension spans its width. The result is two very different formats known by the same number.

Standard 65mm film is as wide as IMAX 65mm film is tall.

Incidentally, this is exactly the same reason why a 35mm cinema frame and a 35mm still-camera frame are not the same size or shape. For cinema film, the 35mm is measured along the horizontal side of the frame, whereas for still-camera film it is measured along the vertical side.

Also, you may have heard that the film was projected at IMAX 70mm, not IMAX 65mm. This is another issue of confusing terminology. The image is exactly the same size in both cases—the extra 5mm is used by the soundtrack during projection. So you always capture IMAX at 65mm and display it at 70mm, but the image is not scaled or cropped in the process. Same thing with standard 65mm film—it’s projected at standard 70mm (not IMAX 70mm).

Yeah, confusing.

Ok, back to Dunkirk. Christopher Nolan wanted to view his dailies on physical film, and since he was shooting two different formats, he received dailies in two different formats. All of the standard 65mm shots were printed to standard 70mm, requiring the production team to carry around a 70mm projector with them. Given the amount of material shot, it was simply too expensive to create IMAX prints of all of the IMAX footage. Only selected shots were reviewed at IMAX 70mm—most were reduced down to fit onto a standard 35mm print.

Because of the time required to process the 35mm reductions, they had to skip syncing sound on all of the IMAX dailies. Fortunately, all of the dialogue scenes were shot on standard 65mm, so they were able to view dailies with sound for the dialogue scenes.

The dailies on The Shape of Water were much simpler technically, but they had to run a very tight ship because the editorial department worked at the studio where most of the film was shot. Director and co-writer Guillermo del Toro would drop in frequently, usually during the lunch break, which meant that the assistants had to work very quickly to ingest the previous day’s footage, giving editor Sidney Wolinsky time to work with it and have something to show Guillermo by lunchtime.

Distributed Workflows

There aren’t many film labs capable of handling all of the complexities of Dunkirk’s workflows (and I’ve skipped over several other tricky bits), so they had to ship all of the film from Europe, where they shot most of the film, to LA where IMAX and FotoKem processed dailies, mailing copies back to Europe for the production team. With all of that turnaround time, dailies took around a week to return to production. (Can you still call them dailies if they take a week? Not sure.)

Call Me By Your Name’s team was also distributed around the world, but where Dunkirk’s workflow was complex, theirs was simple, and where Dunkirk’s workflow was simple, theirs was complex.

Dailies were much simpler for Call Me By Your Name since they were working with standard 35mm film. They did have to send dailies from Crema in northern Italy to Rome for development and scanning, but the workflow remained entirely digital from that point on, greatly facilitating their distributed postproduction workflow.

Call Me By Your Name © Sony Pictures Classics

The editorial team remained in Crema, but the rest of the postproduction was scattered across the world. The color grading was done remotely in Thailand, the sound mixing in France, the VFX and sound design in Rome, and the ADR in LA. Director Luca Guadagnino was even able to direct ADR sessions in LA from a suite in Italy. The two Pro Tools sessions were synced so that the director and editor could watch correctly synced video, listen live as the actors delivered their performances in LA, and give direction as though they were in the same room.

Get Out’s team was much less spread out than Call Me By Your Name’s, but they still made use of remote collaboration tools, as did many of the films on our list. Matthew Poliquin at Ingenuity Studios, which produced Get Out’s (mostly invisible) VFX, used Frame.io’s cloud-based review and feedback tools even for internal communication within the same building.

While it’s hard to argue against the benefits of having the director and editor in the same room for the editorial process on a feature film, cloud platforms like Frame.io make it easier to communicate and provide feedback asynchronously. That’s especially true for someone like Matthew working on VFX, who needs to coordinate feedback for many artists working separately, using Frame.io as a single, central platform to track feedback on various drafts of each shot.

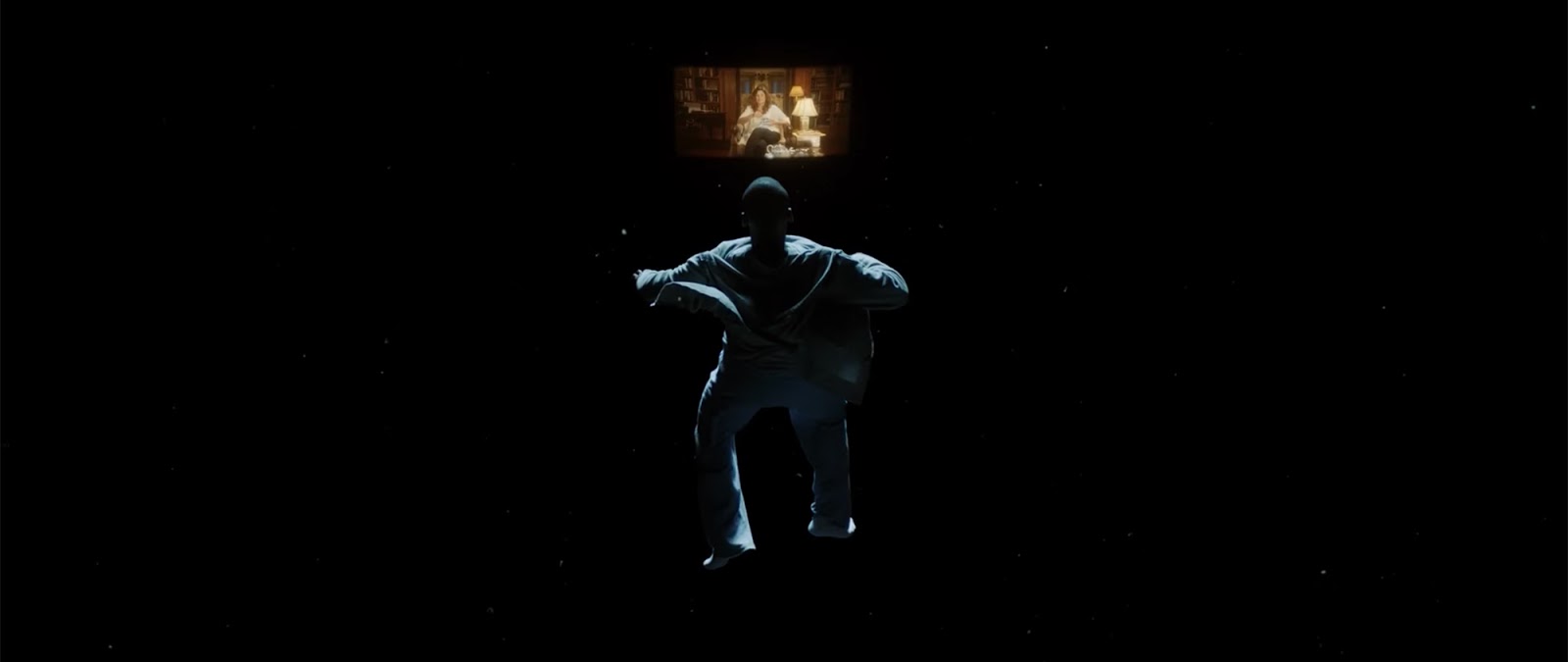

The “Sunken Place” scene in Get Out was one of the most challenging for the VFX team at Ingenuity Studios.

What Really Matters

As fulfilling and exciting as it may be to learn about the workflows behind these amazing Oscar-nominated films, at the end of the day, it’s not about the awards. Christopher Nolan doesn’t watch IMAX-sized dailies to win an award, and Edgar Wright doesn’t meticulously choreograph car chases to music to win one either. I’m sure everyone we talked to on this list (and their collaborators and counterparts on the films not nominated) would say the same thing: they do what they do for the love of the craft and the pursuit of excellence. That pursuit is what drives every passionate artist. It’s what drives us to create amazing software (or coordinate dozens of interviews with hard-to-reach professionals to bring you the best possible blog). And it’s our sincere hope that this information will inspire you to do the same. And if you happen to win an Oscar along the way, that’s just icing on the cake.

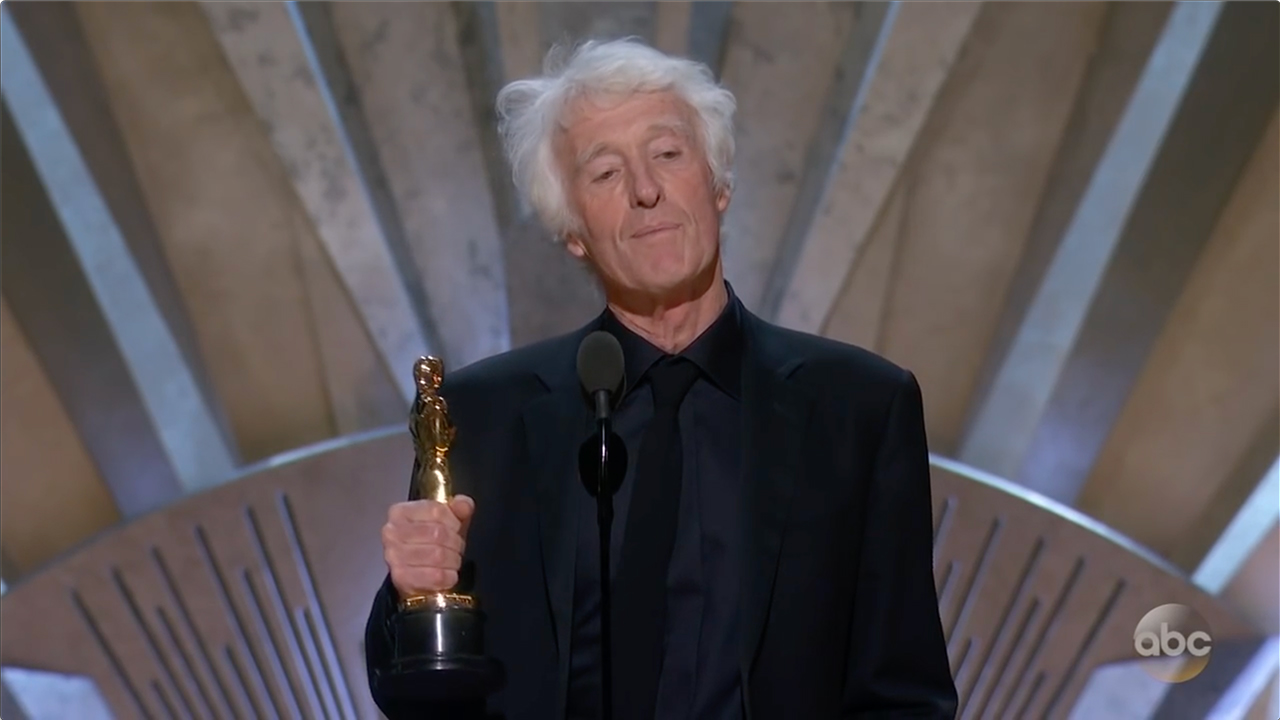

14th time’s a charm! Imagine if Deakins were only in it for the awards. He finally gets Best Cinematography!

Acknowledgments

Thank you very much to the busy people who took the time to answer our questions about these films: Paul Machliss, Steve Jacks, Tommaso Gallone, Francesca Addonizio, Mary Juric, Chema Gomez, Doug Wilkinson, Cam McLauchlin, Trevor Lindborg, Nicholas Lipari, John Lee, Crystal Platas, Nick Ramirez, and Matthew Poliquin.

14 Secrets of Movie Trailer Editors

An interesting read from Mental Floss, by Jake Rossen.

http://mentalfloss.com/article/91351/14-secrets-movie-trailer-editors

JANUARY 26, 2017

Decades ago, Hollywood used to put previews of their coming attractions after the conclusion of their theatrical releases. The teasers earned the nickname “trailers” because they followed the feature film.

Today, trailers aren’t such an afterthought. Studios spend millions of dollars stirring up anticipation for their big-budget movies by releasing trailers that promise consumers something worth the hassle and expense of a ticket. The responsibility for taking the most dazzling 120-odd seconds from hours of footage and splicing it into a coherent—and compelling—mini-movie falls on trailer editors, who screen films months in advance in order to create previews that will build the viral buzz filmmakers look for.

To better understand the job, mental_floss spoke with several editors at three of the most highly respected firms in the business. Here’s how they get you excited about the next blockbuster.

1. YOU NEED TOP-LEVEL SECURITY CLEARANCE.

If you think studios are worried about rough cuts of their films falling into the wrong hands, you’d be correct. As some of the few pairs of eyes outside of the production to see a movie months before release, trailer houses must make sure their offices can’t be tapped by potential pirates. Ron Beck, the owner and creative director of Tiny Hero, says that only employees at Fort Knox might be able to relate to the level of security that trailer editors deal with. “There are cameras everywhere,” he says. “We have sensors that record everyone who goes in and comes out of a door.” Rough cuts of movies typically get delivered on encrypted hard drives and are edited only on hardware that’s inaccessible to an open network.

“All of [the studios] are careful, but Marvel leads the pack,” Beck says. “Their stuff is super-strong. That’s why you rarely see their movies pirated.”

2. THEY MIGHT BE SEEING AN ENTIRELY DIFFERENT MOVIE THAN YOU DO.

In order to begin work on marketing campaigns, trailer firms are usually given extremely early footage that has yet to be polished and edited. Rough cuts might emphasize plot points or characters that wind up getting minimized by the time the picture is done, or “locked.” David Hughes of the UK-based firm Synchronicity says he’s seen a few movies that he barely recognized once they hit theaters. “Bridget Jones’s Diary was quite dark at one point,” he says, “and I recall a totally different opening to Bowfinger where the film-within-the-film was called Star Wars rather than Chubby Rain because the accountant who wrote it was so stupid he didn’t know a film called Star Wars actually existed.”

Since films continue to get pared down right up until release, it’s also common to see scenes in trailers that don’t ultimately make the final cut. “Dirty Rotten Scoundrels [is] my favorite example, because someone wrote to complain that they had waited the whole film to see Steve Martin push an old lady into a swimming pool, as seen in the trailer, only to find that the scene wasn’t in the finished film.”

3. THEY CAN ASK FOR SPECIAL EFFECTS TO GET PRIORITIZED.

Because editors see films so far in advance, they’re often looking at footage full of green screens and unfinished effects work. But if an editor feels like a scene would bolster the trailer’s impact, they can request the studio fast-track the CGI. “We can’t ask what they shoot first, because productions usually revolve around an actor’s schedule,” Beck says. “But we can ask for visual effects stuff we need to be done first.”

4. THEY MAY CUT A TRAILER YOU NEVER SEE.

Daniel Lee, who spent 10 years at Mark Woollen and Associates before migrating to the buzzed-about firm Project X, says that editors are often called upon by directors or producers to splice together a “sizzle reel” made out of stock or existing footage in order to sell a studio on a movie. “It’s becoming increasingly common to do,” he says. “It’s an inexpensive way to sell someone on the vibe of a movie.” Director Joe Carnahan commissioned a reel when he was looking to direct a theatrical version of Daredevil.

5. THEY DON’T LIKE SPOILERS ANY MORE THAN YOU DO.

For 2015’s Terminator Genisys, fans who viewed the trailer were slightly annoyed to learn (spoiler) that perpetual victim John Connor was a Terminator in yet another revision of the franchise’s confusing canon. But those edicts usually come down from the studio, according to Beck. “I like to tease, not tell,” he says. “In certain movies, though, you have to give it up, or the trailer won’t even be good. Revealing a twist is ultimately the studio’s decision, though.”

6. THE 2003 TEXAS CHAINSAW MASSACRE REMAKE REWROTE THE RULES.

Trailers often borrow techniques from earlier trailers that studios noticed were particularly effective at engaging an audience emotionally. One example: the preview for the 2003 remake of The Texas Chainsaw Massacre. “The one that always comes to mind is the trailer for the Michael Bay-produced remake of The Texas Chainsaw Massacre, where black frames were inserted off the beat to disorienting effect,” Hughes says. “This technique has been borrowed for many horror trailers since, including some that we’ve made.”

Another trend-making trailer: the one for 2010’s Inception, with its thunderous “braam” sounds that seemed to influence every heavy action/drama film that followed.

7. THEY CAN’T HAVE PEOPLE POINTING GUNS AT OTHER PEOPLE.

Because trailer content is subject to many of the same ratings restrictions as the feature film itself, editors often have to cut around some of the Motion Picture Association of America (MPAA) mandates. If a trailer is a “green band,” or suitable for general audiences, that means no threatening people with firearms. “There’s a lot of minutiae, like where a gun can be pointed,” Beck says. “You can’t have someone pointing it straight at the camera, for example, or at anyone in the same frame. Sometimes we blow up [zoom] a frame to hide stuff like that.”

8. TRAILERS GET FOCUS GROUPS.

Studios looking to reach the widest possible audience sometimes like to hedge their bets on campaigns and enlist two different trailer vendors to create edits for the project. They’ll focus-test each and back the one with the most support. That’s not unusual, but what irks editors, Lee says, is when a studio’s marketing department decides to split the difference and create a trailer based on ideas from two different creative entities. “They might combine trailers,” he says. “We call that Frankensteining.”

9. THEY CAN GET ACTORS TO SAY ANYTHING.

Because editors have precious little time to communicate the theme or premise of a movie, having a line or two of dialogue that summarizes a character’s motivation can make all the difference. Unfortunately, not all movies come stocked with exposition. If a trailer needs a clarifying line and the actor isn’t available to record dialogue, Beck can go in and splice together sentences from words the actor has already said. “We might use a sound-alike actor, or we might see if we can form whatever sentence with the lines we have. We could make ‘I need to find her’ from someone saying ‘Find her’ and ‘Need to.’”

If all else fails and an actor is needed, Hughes says there’s one relatively quick fix. “If you’ve seen a film in the last five years, you’ve probably seen a film in which at least one line of ADR [Additional Dialogue Recording] was done on an iPhone after the actor had left the set.”

10. THEY LIKE TO LEAVE PRIVATE EASTER EGGS IN TRAILERS.

Studios love when fans of film franchises dissect trailers to spot hidden references or clues. So do editors, but sometimes the Easter eggs they drop in are going to be hard for anyone outside of their family to catch. “I know a few editors, myself included, who try to slip in their voice in a piece,” Lee says. “That’s only if you have enough time to fiddle with it.” Lee’s two kids lent their voices to a sound mix for World of Warcraft: Looking for Group, a documentary about the game. “I don’t know if they made the final cut, but they’re in there.”

11. COMEDIES ARE HARD.

Of all the film genres he’s overseen, Hughes believes comedies that don’t hit the mark are his worst assignment. “I’ve made trailers for comedies where there were literally not enough jokes in the film to fill a trailer,” he says. “Going back in the mists of time, I remember the trailer for Beverly Hills Cop III having one joke in it, Serge saying something sarcastic about Axel Foley’s shoes, and then they cut that joke out of the film.”

12. SOMETIMES YOUTUBE AMATEURS CAN BREAK IN.

Fall down the YouTube rabbit hole and you’ll find thousands of movie trailers cobbled together by hobbyists outside of the industry. While many might underestimate the work and craft involved in doing it professionally, a few have been able to use it as a launching pad to get noticed. “I know one or two editors who got careers because of their YouTube channels, where they were uploading stuff completely as a hobby,” Lee says.

13. THEY LISTEN TO A LOT OF MUSIC—SOME OF IT UNRELEASED.

Beck believes the majority of a trailer’s impact can be chalked up to how the images fit with the music selection. “Music is at least 50 percent of any trailer,” he says. With access to unreleased tracks from music labels, Beck will go jogging with his earphones in to sample tunes, even though he might not find a perfect visual fit for a song for months. “I’ll picture a scene and maybe see something like it a year or so later. And then I’ll go, ‘Oh, I’ve got just the song for this.’”

14. RYAN GOSLING AND MORGAN FREEMAN ARE TRAILER GOLD.

Ever since voiceovers for trailers largely went out of style, editors have needed to keep viewers oriented in other ways. But that doesn’t mean they can’t cheat a little. Beck says that editing a trailer for anything containing Morgan Freeman is like having a narrator. “We did Now You See Me 2 recently, and when I knew we had Morgan Freeman in the movie, I knew the whole trailer was going to be driven by him saying his lines. He’s like the voice of God.”

Another go-to performer: Ryan Gosling. Why? “He just nails it,” Beck says. “He can convey a meaning or moment so quickly that you can use it in the trailer. You’re trying to do so much in a short amount of time, and when an actor is emotive, it makes my job easier.”

Oscar-nominated editors clear up the biggest category misconception

Some great insights on what an editor does, by Mandi Bierly, EW.com.

Leading up to Sunday’s Oscars, EW.com will take a closer look at four categories that moviegoers may mistakenly think of as “technical.” First up: Film Editing, with insights from Life of Pi’s Tim Squyres, Silver Linings Playbook’s Jay Cassidy and Crispin Struthers, and Zero Dark Thirty’s Dylan Tichenor and William Goldenberg, the latter of whom also cut Argo, making him one of only a handful of editors in Oscar history to compete with himself.

THE KULESHOV EFFECT: UNDERSTANDING VIDEO EDITING’S MOST POWERFUL TOOL

The Kuleshov Effect influences every film and every filmmaker. Understanding it can give insight into “movie magic” and help you create the meaning you want to express in your project.

The Kuleshov Effect is the single most important concept in editing, if not in filmmaking itself. It’s a cornerstone of visual storytelling; it is through this phenomenon that we can suggest meaning and manipulate space as well as time. It is a fundamental aspect of “movie magic,” one that every filmmaker needs to understand.

Kuleshov and Film Theory

Lev Kuleshov (1899–1970) was a Russian filmmaker, considered by some to be the first film theorist because of his work dating to the 1910s. Kuleshov asked the question: what made cinema a distinct art, separate from photography, literature or theatre? He argued that any form of art consists of two things: the material itself and the way in which the material is organized. Following this logic, Kuleshov concluded that the organization of individual shots, also known as montage, is what makes film stand apart.

In 1921, Kuleshov set up a series of cinematic demonstrations that gave the phenomenon its name. In these experiments, he projected the face of a well-known actor and then cut to a plate of soup. Next he showed another shot of the same actor, followed by a girl in a coffin. The final sequence was the actor’s face, followed by an attractive young woman. Audiences responded that the actor seemed hungry in the first sequence, quite mournful in the second, and finally seemed to exude lust. In reality, all three shots of the actor were exactly the same; his face was interpreted differently based on what it was placed next to in the edit. Additionally, even though there was no establishing shot of the actor together with objects from the other shots, the audience perceived them to be in close proximity to one another. Through the ordering of the shots, two separate places seemed to be one continuous location. Manipulating space and time was possible through the use of editing. This was a huge moment for cinema, with Kuleshov declaring montage to be the central principle that defines film as an art on its own.

Kuleshov’s theories were instrumental in the creation of a powerful genre of filmmaking, Soviet Montage, which was eventually suppressed under Stalin. But the Kuleshov Effect lives on, exemplified in almost every film or video that we encounter.

Understanding the Kuleshov Effect allows editors to better control the tone and meaning found in their films. Through the choices in how shots are organized and sequenced, filmmakers can create new meaning by juxtaposing unrelated images. With the illusion of condensing space, we are able to create new worlds, connecting places that were previously separate. Thus, the Kuleshov Effect is a huge part of the magic that is film.

Sidebar

Russian film theorists in the early 1900s were hugely influential in shaping how cinema was to develop. They saw film as a powerful tool of social transformation, inherently political and inextricably linked to the filmmakers’ worldview. Kuleshov’s contemporaries explored the power of montage, and their innovations paved the way for today’s filmmakers.

Sergei Eisenstein promoted the idea that the essential element of all art is conflict. Eisenstein advocated dialectic montage: the idea that a sequence of shots can have more meaning than the sum of its individual parts. He was inspired by his study of Japanese kanji, which juxtapose two concepts to create a new, third concept. Eisenstein’s films “Battleship Potemkin” (1925) and “Strike” (1925) are both classics of Russian cinema.

Dziga Vertov eschewed dramatic films as a corrupting influence. An early experimenter in the realm of documentary, Vertov pioneered many modern staples of filmmaking in his newsreels. In 2014, “Sight and Sound” named his film, “Man with a Movie Camera” (1929), the best documentary ever.

Taken from an article written by Erik Fritts for Videomaker magazine.

https://www.videomaker.com/article/c10/18236-the-kuleshov-effect-understanding-video-editing%E2%80%99s-most-powerful-tool
